AWS EC2 T2 instances: 700 seconds of fame

There is no shortage of innovation in cloud pricing models, ranging from Google’s preemptible virtual machines to pay-by-the-tick code execution services such as AWS Lambda. The overall trend is that pricing gets ever more granular and complex, and this applies both to emerging pay-as-you-go services and to mainstream virtual machines. As IaaS providers get more creative, so do their customers. We’re not far from the time when capacity owners will be placing and buying back IT capacity just as they manage corporate cash positions today. It’s a world where enterprises will be closing daily books not just in dollars but also in petabytes and GHz.

A small step in this direction is a new utility pricing model implemented by AWS for their T2 virtual machines (aka EC2 instances). These virtual machines have a fixed maximum capacity measured by the number of CPU cores and a variable burst capacity measured in CPU credits. CPU credits measure the amount of time that the virtual machine is allowed to run at its maximum capacity. One CPU credit is equal to 1 virtual CPU running at 100% utilization for 1 minute. If the machine has 60 credits, it can utilize 1 vCPU at 100% for 1 hour. If the machine’s CPU credit balance is zero, it will run at what Amazon calls “Base performance”.
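The credit definition above can be turned into a couple of lines of arithmetic. This is only a sketch: the function names are made up for illustration, and it assumes credits are consumed linearly with utilization (so half the load for twice the time costs the same).

```python
def credits_consumed(utilization: float, minutes: float, vcpus: int = 1) -> float:
    """CPU credits spent by `vcpus` vCPUs running at `utilization` (0.0-1.0).

    Follows the definition: 1 credit = 1 vCPU at 100% for 1 minute.
    Linear scaling with utilization is an assumption of this sketch.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0.0 and 1.0")
    return utilization * minutes * vcpus


def full_speed_minutes(credit_balance: float, vcpus: int = 1) -> float:
    """Minutes the instance can keep `vcpus` vCPUs at 100% on its balance."""
    if vcpus < 1:
        raise ValueError("vcpus must be at least 1")
    return credit_balance / vcpus


if __name__ == "__main__":
    # 60 credits keep one vCPU fully busy for one hour.
    print(full_speed_minutes(60))
    # One vCPU at 50% for two minutes costs one credit.
    print(credits_consumed(0.5, 2))
```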

The AWS pricing page provides the following parameters for T2 instances as of May 17, 2015:

| Instance type | Initial CPU credit* | CPU credits earned per hour | Base performance (CPU utilization) |
|---|---|---|---|
| t2.micro | 30 | 6 | 10% |
| t2.small | 30 | 12 | 20% |
| t2.medium | 60 | 24 | 40%** |

- T2 instances run on High Frequency Intel Xeon Processors operating at 2.5GHz with Turbo up to 3.3GHz.

The above table means that a t2.small instance can run for 12 minutes each hour at full capacity. If it runs out of CPU credits and still has useful work to do, it will run on a CPU share equivalent to a 500 MHz CPU (2.5 GHz * 20%). Expect delays, because that’s not much. To put things in context, the Samsung Galaxy S6 phone has 4 cores with a 2.1 GHz clock speed each. The mobile phone CPU architecture is no match for Xeon, but I couldn’t resist the chance to reference the specifications.
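The same reading of the table can be reproduced for all three instance types. The snippet below is a rough sketch: it takes the credits-per-hour column and the 2.5 GHz clock from the text above, and it assumes base performance is simply credits earned per hour divided by 60, which happens to match the published percentages. The names are illustrative only.

```python
# Credits earned per hour, copied from the pricing table above.
CREDITS_PER_HOUR = {"t2.micro": 6, "t2.small": 12, "t2.medium": 24}
CLOCK_GHZ = 2.5  # nominal Xeon clock quoted on the pricing page


def burst_minutes_per_hour(instance_type: str) -> float:
    """Minutes per hour one vCPU can run at 100% on earned credits alone."""
    return float(CREDITS_PER_HOUR[instance_type])  # 1 credit = 1 vCPU-minute


def base_share(instance_type: str) -> float:
    """Base performance as a fraction of one vCPU (assumed: credits / 60)."""
    return CREDITS_PER_HOUR[instance_type] / 60


def base_clock_mhz(instance_type: str) -> float:
    """Rough 'equivalent clock' of the base share, in MHz."""
    return base_share(instance_type) * CLOCK_GHZ * 1000


if __name__ == "__main__":
    for name in CREDITS_PER_HOUR:
        print(name, burst_minutes_per_hour(name),
              f"{base_share(name):.0%}", f"{base_clock_mhz(name):.0f} MHz")
```

The "equivalent clock" is only a back-of-the-envelope figure; a 20% share of a 2.5 GHz core is not the same thing as a real 500 MHz processor.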

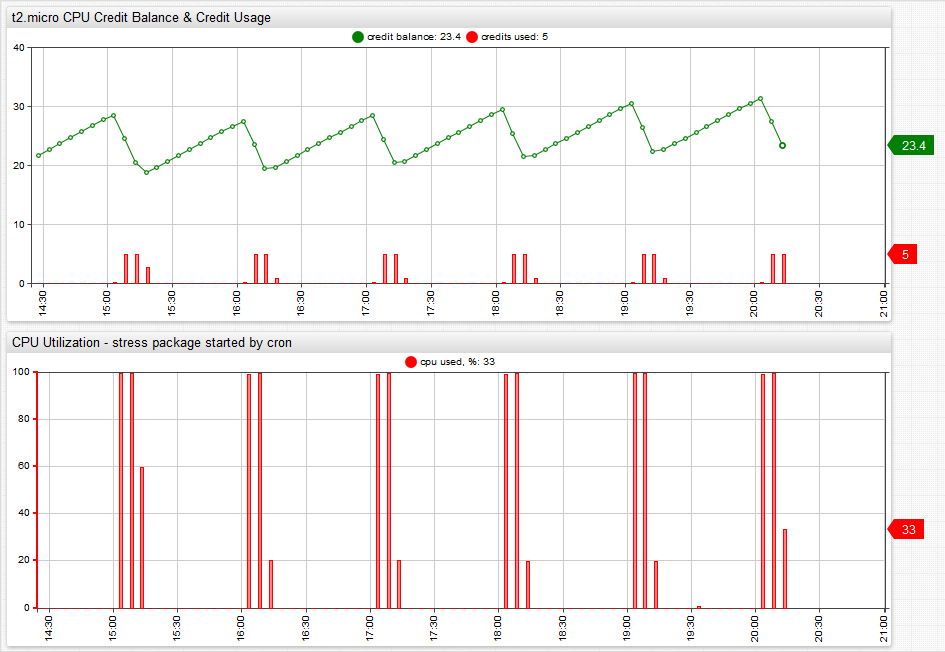

The charts below illustrate the relationship between CPU credit balance, CPU credits and CPU usage.

CPU usage draws down CPU credits, which reduces the CPU credit balance. Idle time frees the CPU and earns CPU credits.

The CPU usage in this particular case is generated with the stress package, triggered by cron once an hour, and it runs just long enough to keep the CPU credit balance at a constant level throughout the day. There were no applications running on the instance other than the services pre-installed on the Ubuntu 14.04 server distribution.

The CPU load command looks as follows:

`3 * * * * stress --cpu 1 --timeout 700`

Those of you with a trained eye for detail will have noticed that the stress command loads the CPU for 700 seconds. That is 20 seconds less than the advertised 12 minutes, but we’ll assume that the difference is the CPU time consumed by the machine when it’s not being stressed.
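The 700-second figure can be sanity-checked with a toy model of the hourly credit budget. This is a sketch under stated assumptions: a t2.small earning 12 credits per hour, one vCPU at 100% while stress runs, and a guessed 20 seconds of background CPU time per hour. It ignores any cap on or expiry of accumulated credits, and the function names are hypothetical.

```python
def idle_headroom_seconds(credits_per_hour: float, stress_seconds: float) -> float:
    """Full-speed seconds per hour left over after the stress run."""
    return credits_per_hour * 60.0 - stress_seconds


def simulate_balance(start_balance: float, hours: int,
                     credits_per_hour: float = 12.0,
                     stress_seconds: float = 700.0,
                     background_seconds: float = 20.0) -> list:
    """Credit balance at the end of each hour under a fixed hourly load."""
    balance = start_balance
    history = []
    for _ in range(hours):
        balance += credits_per_hour                              # earned
        balance -= (stress_seconds + background_seconds) / 60.0  # spent
        balance = max(balance, 0.0)                              # never negative
        history.append(balance)
    return history


if __name__ == "__main__":
    print(idle_headroom_seconds(12, 700))   # seconds left for background work
    print(simulate_balance(30.0, 24)[-1])   # balance after a simulated day
```

With these inputs the balance stays flat, which is the behaviour the cron schedule was tuned for. Raising `stress_seconds` to 720 in the model makes the balance drift downward hour by hour.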

So what’s the conclusion on T2 instances after all? One way to approach this is to follow Amazon’s advice that “T2 instances are a good choice for workloads that don’t use the full CPU often or consistently, but occasionally need to burst”. The question is how you know whether T2 is a good choice for your particular application. The solution is monitoring and alerting. Unlike other instances, T2 machines expose CPU credit balance and CPU credit usage metrics, which you can view in the Amazon CloudWatch console or query with the AWS CloudWatch API.
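As a rough illustration of querying the API, the sketch below uses boto3's `get_metric_statistics` to pull the `CPUCreditBalance` metric for one instance. The instance ID, region, and helper names are placeholders, and the call itself needs boto3 plus valid AWS credentials, so treat it as a starting point rather than a finished tool.

```python
from datetime import datetime, timedelta, timezone


def credit_balance_request(instance_id: str, hours: int = 24,
                           period_seconds: int = 300) -> dict:
    """Build the keyword arguments for CloudWatch get_metric_statistics."""
    end = datetime.now(timezone.utc)
    return {
        "Namespace": "AWS/EC2",
        "MetricName": "CPUCreditBalance",  # "CPUCreditUsage" works the same way
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "StartTime": end - timedelta(hours=hours),
        "EndTime": end,
        "Period": period_seconds,
        "Statistics": ["Average"],
    }


def fetch_credit_balance(instance_id: str, region: str = "us-east-1") -> list:
    """Return (timestamp, average balance) pairs, oldest first."""
    import boto3  # imported lazily so the request builder runs without boto3

    cloudwatch = boto3.client("cloudwatch", region_name=region)
    response = cloudwatch.get_metric_statistics(**credit_balance_request(instance_id))
    points = [(p["Timestamp"], p["Average"]) for p in response["Datapoints"]]
    return sorted(points)


if __name__ == "__main__":
    # Placeholder instance ID; replace with one of your own T2 instances.
    for timestamp, balance in fetch_credit_balance("i-0123456789abcdef0"):
        print(timestamp.isoformat(), balance)
```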

You can also create alerts on the CPU credit balance that fire during prime hours if the system runs out of credits.
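One possible shape for such an alert is a CloudWatch alarm created with boto3's `put_metric_alarm`. Everything specific here is an assumption for illustration: the alarm name, the threshold of 5 credits, the SNS topic ARN, and the helper names. The sketch also does not restrict the alarm to prime hours; that would have to be handled separately, for example by the notification target.

```python
def credit_alarm_request(instance_id: str, sns_topic_arn: str,
                         threshold: float = 5.0) -> dict:
    """Build the keyword arguments for CloudWatch put_metric_alarm."""
    return {
        "AlarmName": f"{instance_id}-low-cpu-credit-balance",  # hypothetical name
        "Namespace": "AWS/EC2",
        "MetricName": "CPUCreditBalance",
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Minimum",
        "Period": 300,            # seconds per evaluation window
        "EvaluationPeriods": 1,
        "Threshold": threshold,   # assumed cut-off, tune for your workload
        "ComparisonOperator": "LessThanOrEqualToThreshold",
        "AlarmActions": [sns_topic_arn],
    }


def create_credit_alarm(instance_id: str, sns_topic_arn: str,
                        region: str = "us-east-1") -> None:
    """Create or update the alarm; needs boto3 and AWS credentials."""
    import boto3  # imported lazily so the request builder runs without boto3

    cloudwatch = boto3.client("cloudwatch", region_name=region)
    cloudwatch.put_metric_alarm(**credit_alarm_request(instance_id, sns_topic_arn))


if __name__ == "__main__":
    # Both values below are placeholders.
    create_credit_alarm("i-0123456789abcdef0",
                        "arn:aws:sns:us-east-1:123456789012:ops-alerts")
```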

Last but not least, if you’re running more than a few EC2 instances, you can have Axibase Time-Series Database collect CloudWatch metrics for you so you can make smart capacity planning and forecasting decisions at scale.

Enjoy!